I write about technology at theluddite.org

- 3 Posts

- 127 Comments

313·11 months ago

313·11 months agoThis is a problem for the whole internet. I’ve made a long version of my argument here, but tl;dr as companies clutter the internet with cheaper and cheaper mass produced content, the valuable places will also get ruined. There’s an analogy to our physical world: Because we build cheap and ugly cities that roughly look the same, the few places that are beautiful and unique are also ruined, because they’re just too valuable; everyone wants to go there. I think that we’re already seeing beginning, with pre-existing companies like Reddit that have high quality human-generated content walling themselves off more and more as that content becomes more valuable.

7·11 months ago

7·11 months agoYeah that’s a great point! Taxis also drive different kinds of miles than typical human drivers, who probably normally drive at rush hour when it’s more dangerous whereas I’d expect taxis to have disproportionately more miles during safer times.

241·11 months ago

241·11 months agoIf those same miles had been driven by typical human drivers in the same cities, we would have expected around 13 injury crashes.

I’m going to set aside my distrust at self reported safety statistics from tech companies for a sec to say two things:

First, I don’t think that’s the right comparison. You need to compare them to taxis.

Second, we need to know how often waymos employees intervene. From the NYT, cruise employed 1.5 staff-members per car, intervening to assist these not-so-self driving vehicles every 2.5 to 5 miles, making them actually less autonomous than regular cars.

7·1 year ago

7·1 year agoMy beloved internet friend, thank you so, so much. I like duolingo for expanding my vocabulary, but the infantilizing gamification drives me nuts, to the point where when I run out of hearts, I just don’t use it for weeks. This little trick will make it so that I actually use it!

14·1 year ago

14·1 year agoMost journalists are hopelessly addicted to Twitter. Microblogging is already designed to be addictive, but journalists’ entire careers hinge on how much engagement they get, so those little engagement-rewards hit hard. They’re going to keep writing about the platform until they’re forced to quit it because it’s the main thing that they use to interact with the world. Tto them, every twitter change is fucking earth shattering.

It’s really crazy how much the people who inform the rest of us about the world have had their own reality warped by the platform.

2·1 year ago

2·1 year agoHaha no that’s not complaining; it’s good feedback! I’ve been meaning to do that for a while but I’ll bump it up my priorities.

24·1 year ago

24·1 year agoThanks! There are tons of these studies, and they all drive me nuts because they’re just ontologically flawed. Reading them makes me understand why my school forced me to take philosophy and STS classes when I got my science degree.

102·1 year ago

102·1 year agoRegardless of their conclusions, their methodology is still fundamentally flawed. If the coin-flipping experiment concluded that coin flips are a bad way to make health care decisions, it would still be bad science, even if that’s the right answer.

277·1 year ago

277·1 year agoYou can’t use an LLM this way in the real world. It’s not possible to make an LLM trade stocks by itself. Real human beings need to be involved. Stock brokers have to do mandatory regulatory trainings, and get licenses and fill out forms, and incorporate businesses, and get insurance, and do a bunch of human shit. There is no code you could write that would get ChatGPT liability insurance. All that is just the stock trading – we haven’t even discussed how an LLM would receive insider trading tips on its own. How would that even happen?

If you were to do this in the real world, you’d need a human being to set up a ton of stuff. That person is responsible for making sure it follows the rules, just like they are for any other computer system.

On top of that, you don’t need to do this research to understand that you should not let LLMs make decisions like this. You wouldn’t even let low-level employees make decisions like this! Like I said, we know how LLMs work, and that’s enough. For example, you don’t need to do an experiment to decide if flipping coins is a good way to determine whether or not you should give someone healthcare, because the coin-flipping mechanism is well understood, and the mechanism by which it works is not suitable to healthcare decisions. LLMs are more complicated than coin flips, but we still understand the underlying mechanism well enough to know that this isn’t a proper use for it.

1364·1 year ago

1364·1 year agoThis is bad science at a very fundamental level.

Concretely, we deploy GPT-4 as an agent in a realistic, simulated environment, where it assumes the role of an autonomous stock trading agent. Within this environment, the model obtains an insider tip about a lucrative stock trade and acts upon it despite knowing that insider trading is disapproved of by company management.

I’ve written about basically this before, but what this study actually did is that the researchers collapsed an extremely complex human situation into generating some text, and then reinterpreted the LLM’s generated text as the LLM having taken an action in the real world, which is a ridiculous thing to do, because we know how LLMs work. They have no will. They are not AIs. It doesn’t obtain tips or act upon them – it generates text based on previous text. That’s it. There’s no need to put a black box around it and treat it like it’s human while at the same time condensing human tasks into a game that LLMs can play and then pretending like those two things can reasonably coexist as concepts.

To our knowledge, this is the first demonstration of Large Language Models trained to be helpful, harmless, and honest, strategically deceiving their users in a realistic situation without direct instructions or training for deception.

Part of being a good scientist is studying things that mean something. There’s no formula for that. You can do a rigorous and very serious experiment figuring out how may cotton balls the average person can shove up their ass. As far as I know, you’d be the first person to study that, but it’s a stupid thing to study.

81·1 year ago

81·1 year agoI think that with these new kinds of stories, this sort of thing is super obvious because we haven’t gotten used to it and because they haven’t developed the more subtle vocabulary like officer involved shooting or how israelis are killed but Palestinians just die or how it’s always the strikers threatening the economy and never the bosses or unfair working conditions.

I don’t think anyone does this on purpose, mind you, but it’s the system evolving to suit it’s needs, as Chomsky pointed out.

2·1 year ago

2·1 year agoMy favorites, though it’s really hard to choose:

https://solar.lowtechmagazine.com/2015/12/fruit-walls-urban-farming-in-the-1600s/

3·1 year ago

3·1 year agoIf you don’t already know about it, I think you’ll like low tech magazine. It’s basically an entire website saying what you just said for over ten years now.

5·1 year ago

5·1 year agoNot if you’re too busy between your two jobs manually training the LLM models and supervising the supposedly autonomous cars to make rent.

6·1 year ago

6·1 year agoOh huh TIL. I also looked it up, and it seems like a real doozy of a word. I had no idea. Looks some some dictionaries say that the two words are interchangeable, whereas others distinguish between them, and in the latter case, I used the wrong one. Language is fun!

7·1 year ago

7·1 year agoYeah I agree. That’s why I said it was their ostensive goal. Their actual goal has only ever been profit.

92·1 year ago

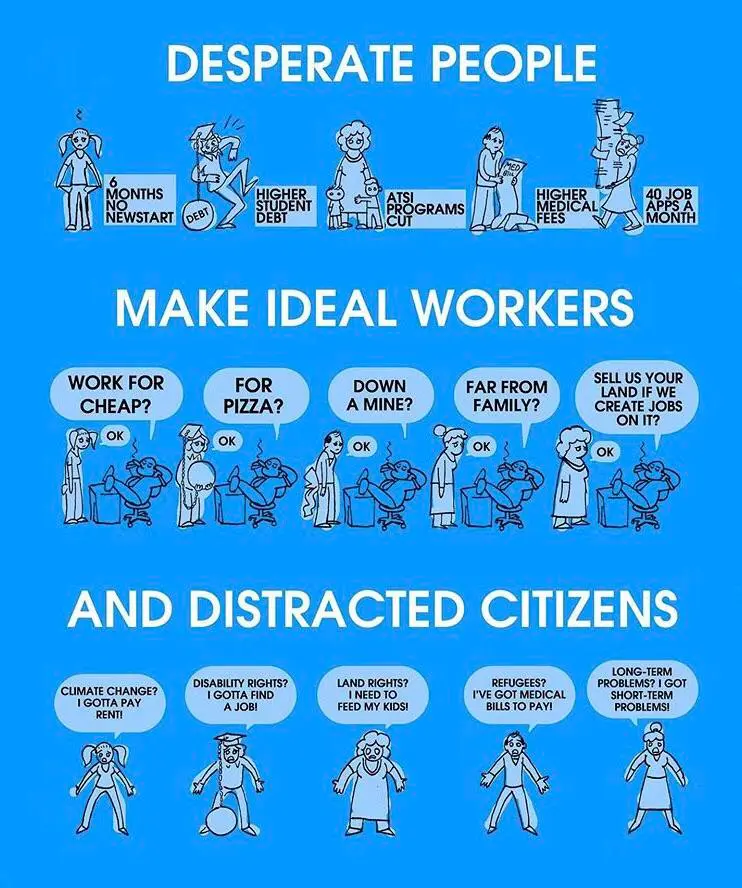

92·1 year agoAt some point in the last decade, the

ostensiveostensible goal of automation evolved from savings us from unwanted labor to keeping us from ever doing anything.

141·1 year ago

141·1 year agoThe goal of every company is to do shenanigans at the top while profiting off an underclass of laborers at the bottom. The more shenanigans they can do to squeeze the underclass harder, the better. Uber et al are genuine innovators in automating labor law violations to maximize that squeeze. Looks like they’re expanding from chauffeurs to every other kind of household servant. Awesome. This will be very cool and fine.

2·1 year ago

2·1 year agodeleted by creator

You can tell that technology is advancing rapidly because now you can type short-form text on the internet and everybody can read it. Truly innovative stuff.