I’ve been playing around with Ollama. Given that you download the model yourself, can you trust that it isn’t sending telemetry?

Deny it internet access

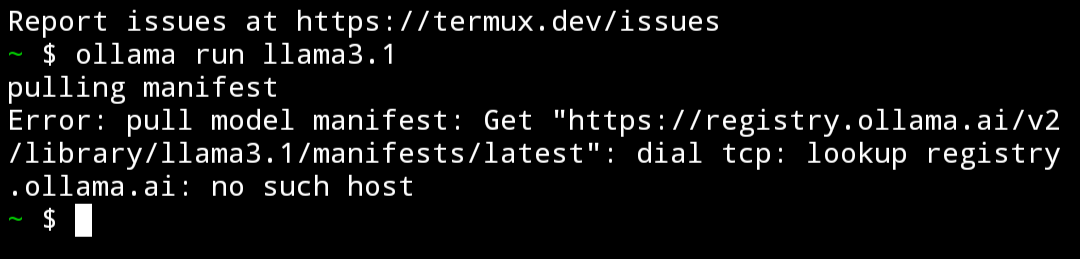

Just tried that. It appears it doesn’t work if it can’t check for an update. I can’t figure out how to disable updates, as I’m not that big into terminal use and can’t find much online :/

I think you can point to a file instead too

Ollama does have some details on GitHub about this. According to them, no, it does not send data anywhere. You can also monitor network traffic to watch for it sending anything it shouldn’t. If you don’t want to do that, firewall it.

And if you don’t want to do that… run it in a VM and unplug your NIC/disconnect Wi-Fi.

LLMs are no different from any other type of software. Is there a privacy policy you can refer to? Can you monitor the network to see if it is trying to communicate with anything? If you want to be sure, just block internet access. This seems to be open source, so you could also just search for the phone-home code and remove it if it exists.
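
To make the "monitor the network" suggestion a bit more concrete, here is a minimal sketch. It assumes you have already collected the remote addresses the process is connected to (from `ss`, `lsof`, Wireshark or similar) and uses Python's standard `ipaddress` module to flag the ones that leave your machine or LAN. The helper name `external_peers` and the sample addresses are made up for illustration.

```python
import ipaddress

def external_peers(remote_addrs):
    """Return the addresses that are not loopback, private, or link-local."""
    flagged = []
    for addr in remote_addrs:
        ip = ipaddress.ip_address(addr)
        # Loopback and LAN traffic never leaves your network, so skip it.
        if ip.is_loopback or ip.is_private or ip.is_link_local:
            continue
        flagged.append(addr)
    return flagged

# Hypothetical list of remote endpoints seen for the runtime process.
sample = ["127.0.0.1", "::1", "192.168.1.20", "8.8.8.8"]
print(external_peers(sample))  # ['8.8.8.8']
```

An empty result while you chat with the model is a good sign. Anything that shows up is worth looking up before you panic, since an update check would also land here.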

You can check the process to see if it’s communicating at all. None of the big ones do. It’s possible someone could be fucking with the file though; before the safetensors format this was a big issue, and it still sort of is afterwards. Only download from reputable sources.
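
For context on why safetensors helped: older pickle-based checkpoints can run arbitrary code when loaded, whereas a safetensors file is just an 8-byte little-endian length, a JSON header, and raw tensor bytes. Below is a rough sketch of peeking at that header without loading any tensors. The function name and the size limit are my own choices, not part of any library.

```python
import json
import struct

def read_safetensors_header(path, max_header_bytes=100_000_000):
    """Parse only the JSON header of a .safetensors file; no tensor data is loaded."""
    with open(path, "rb") as f:
        # First 8 bytes: unsigned little-endian 64-bit length of the JSON header.
        (header_len,) = struct.unpack("<Q", f.read(8))
        if header_len > max_header_bytes:
            raise ValueError("header length looks implausible")
        # The header maps tensor names to dtype, shape and byte offsets.
        return json.loads(f.read(header_len).decode("utf-8"))

# Usage (the path is a placeholder):
# header = read_safetensors_header("model.safetensors")
# print(list(header)[:5])
```

This only shows that the file is structured data rather than executable code. It says nothing about whether the weights themselves are what the uploader claims, so the advice about reputable sources still stands.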

Can’t you run it from a container? I guess that will slow it down, but it will deny access to your files.

Containers don’t really slow down apps significantly. It’s not a VM; it’s still a native process running on your host kernel, just isolated in its own namespaces with restricted access to hardware.

That is true for Linux and maybe Mac, but on Windows I think they have a bit more overhead. But again, I agree that in most cases it is not significant.

Is the overhead because of containers or is it because you’re running something that is meant to run on Linux and is using a conversion layer like MinGW ?

Windows > Windows Subsystem for Linux (WSL) Ubuntu > docker container

I think WSL 2 actually runs a real Linux kernel in a lightweight VM. I’ve tried getting my own LLM instance running on my Windows machine but it’s been such a pain.

Yeah, you could. Though I don’t see any evidence that the large open source LLM programs like jan.ai or Ollama are doing anything wrong with their program or files. Chucking it in a sandbox would solve the problem for good though.
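
If you want to try the sandbox route with Docker, something like the sketch below builds a `docker run` command that starts the `ollama/ollama` image with networking disabled (`--network none`). Assumptions on my part: the container name, the volume name, and that you already pulled your models into that volume during an earlier run with networking on. The `--gpus all` flag only works if the NVIDIA container tooling is installed.

```python
import shlex

def offline_ollama_cmd(name="ollama-offline", volume="ollama", gpus=False):
    """Build a docker run command for Ollama with no network access."""
    cmd = [
        "docker", "run", "-d",
        "--name", name,                    # hypothetical container name
        "--network", "none",               # loopback only, no outside connectivity
        "-v", f"{volume}:/root/.ollama",   # volume holding previously pulled models
    ]
    if gpus:
        cmd += ["--gpus", "all"]           # requires NVIDIA container support on the host
    cmd.append("ollama/ollama")
    return cmd

# Print the command so you can review it before running it yourself.
print(shlex.join(offline_ollama_cmd()))
```

With no network you can't publish a port either, so you would talk to it from inside the container, for example with `docker exec -it ollama-offline ollama run <model>`.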

You could use “Alpaca” flatpak and remove the internet access with flatseal after having downloaded the model. (Linux)

Or deny the app’s access to internet in app settings. (Android)

I found this and actually like it better than Alpaca. Your mileage may vary, but they claim that it’s 100% private, no internet necessary:

It’s nice, but sadly it’s hard to load unsupported models. I really wish you could easily sideload, but it’s fine unless you have a niche use case.

I’m not sure what you mean by ‘hard to load’. You find the model you want, you download it, then load it up to chat. What’s the issue you’re having?

At my parents’ for the week, but IIRC I had some sort of incompatible extension with a model I downloaded off Hugging Face.

It’s not the model that would be sending telemetry; it’s the runtime that you load it in. Ollama is an open source wrapper around llama.cpp, so (if you have enough patience) you could inspect the source code to be sure. Regarding running it in a sandbox: you could, and generally it does not add any tangible overhead to tokens-per-second performance, but keep in mind that in order to give the model runtime (Ollama, vLLM and the like) access to your GPU you usually need some form of sandbox concession, like PCIe passthrough for VMs or running NVIDIA’s proprietary container runtime plugin. From my measurements, there is zero difference in performance between running a model loaded on a GPU on bare metal, in a Docker container with the NVIDIA container runtime, or in a Proxmox VM with PCIe passthrough. The model executes on the GPU itself and barely uses any CPU (sampling and LoRAs are usually CPU operations). vLLM does collect anonymized usage stats. Since it’s open source, you can actually see what’s being sent (spoiler: it’s pretty boring). As far as I know, Ollama has nothing like that. None of the open source engines that I know of send your full prompts or responses anywhere. That doesn’t mean they will stay that way forever, or that you should be less vigilant though 👍
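
On the vLLM usage stats: as far as I recall from its docs, you can opt out by setting the `VLLM_NO_USAGE_STATS` or `DO_NOT_TRACK` environment variable before it starts. Here is a small sketch of launching it with both set. The helper name is made up, and you should double-check the variable names against the vLLM version you actually run.

```python
import os

def env_without_usage_stats(base=None):
    """Copy an environment and add the vLLM usage-stats opt-out variables."""
    env = dict(os.environ if base is None else base)
    env["VLLM_NO_USAGE_STATS"] = "1"  # opt-out variable, per my reading of the vLLM docs
    env["DO_NOT_TRACK"] = "1"         # generic opt-out that vLLM is also said to honour
    return env

# Usage (the model name is a placeholder):
# import subprocess
# subprocess.run(["vllm", "serve", "<model>"], env=env_without_usage_stats())
```

Setting the variables doesn't replace watching the traffic. It just means that anything you do see afterwards is more interesting.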

The only real way to check is to inspect the packets being sent and/or the source code. This “problem” isn’t specific to local AI; it applies to open source software in general.

The models can’t do anything the inference library doesn’t allow for. So you shouldn’t need to worry about a rogue model if you’re loading it in a runtime that someone you trust can vouch for. If you’re worried about Ollama, you can monitor its network usage (and block it in your firewall). There shouldn’t be any network activity if you disable auto-update.

For OP: download Wireshark and see if any new connections spring up when you run it.